Discover the Perfect Product

Use our tool to quickly find the right onsemi product for your application.

System Solution Guides

Dig deeper with our system solution guides, which explain how onsemi's product range serves a wide variety of applications.

Explore Guides

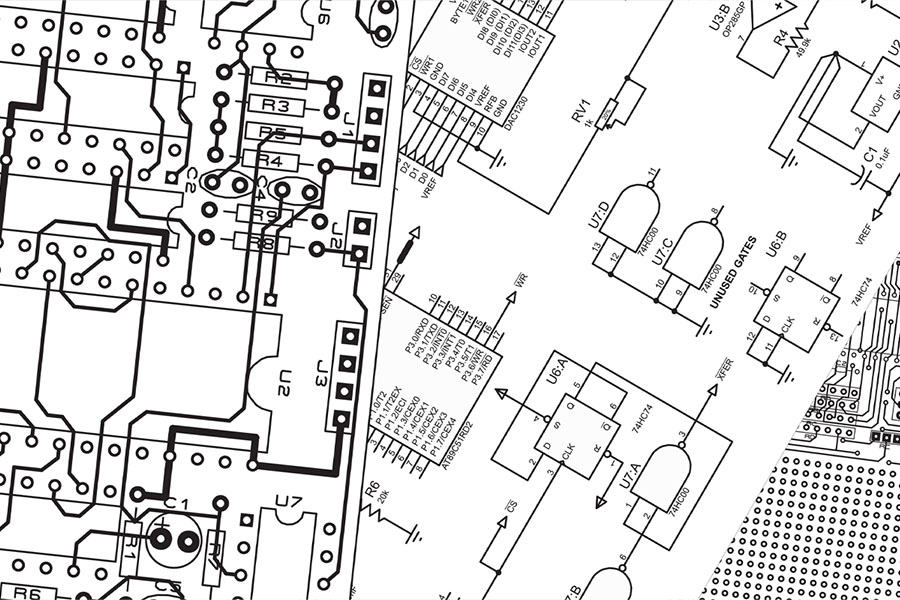

Interactive Block Diagrams

Interactive block diagrams (IBDs) provide a detailed, organized overview of a design, along with relevant product information and specifications.

Talk to Sales

Have questions about our products and services, or need help with a design? Our sales support team is here to help!

Annual Sustainability Report 2022

Our 2022 sustainability report details our environmental, social and governance (ESG) initiatives.

#TransformYourFuture

Join a team where cutting-edge intelligent technologies enable world-leading innovators and shape the future.

Careers at onsemi